Chrome in the Cloud, at Scale: Kernel’s Blueprint for Production Agents (YC S25)

Browsers as reliable infrastructure. The economics of a browser runtime. The people who obsess over latency.

Kernel is a cloud runtime that gives AI agents secure, low-latency real browsers (plus durable authenticated sessions) to operate on the web at scale. Kernel sells reliable agency: the ability to take actions on websites with production-grade speed, state, and identity guarantees. The mission is to make it cheap and safe for sanctioned agents to do the unglamorous work of the internet (log in, click, download, verify, transact) without every team reinventing browser ops.

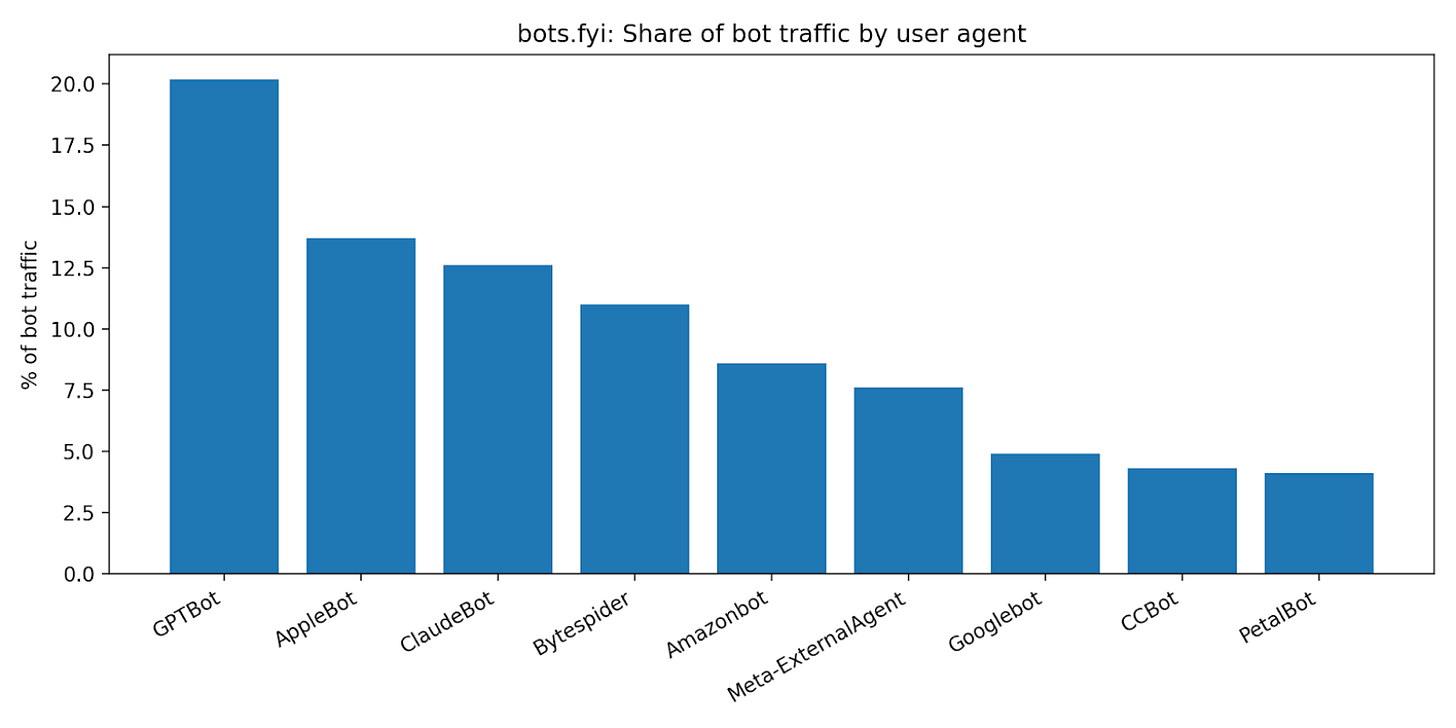

The Web Is Hostile to Automation

Kernel’s customer is anyone shipping production browser automations, which increasingly means AI agents. Their pain is that the web is messy: no APIs, volatile DOMs, bot defenses, logins, captchas, and rate limits. Running a browser fleet turns into a second job of cold starts, flaky sessions, observability, and incident response.

Today’s options cluster into four buckets:

DIY containers/VMs (Playwright + Kubernetes): maximal control, maximal ops burden; cold starts and state reuse are hard.

Headless-browser clouds (Browserbase, Browserless, Steel): faster to start, but costs can spike (minimum billing, proxy markups) and “session as durable state” is not free.

RPA suites (UiPath, etc.): friendly UI, but heavy, expensive, and often not built for agentic scale or developer velocity.

Scraping stacks (proxies + anti-bot + scrapers): good for extraction, weaker for multi-step authenticated workflows.

The shortcomings are mostly second-order. Once a workflow matters, you need durable identity, auditability, and predictable unit economics, which is exactly where ad-hoc scripts die.

Browsers as Reliable Infrastructure

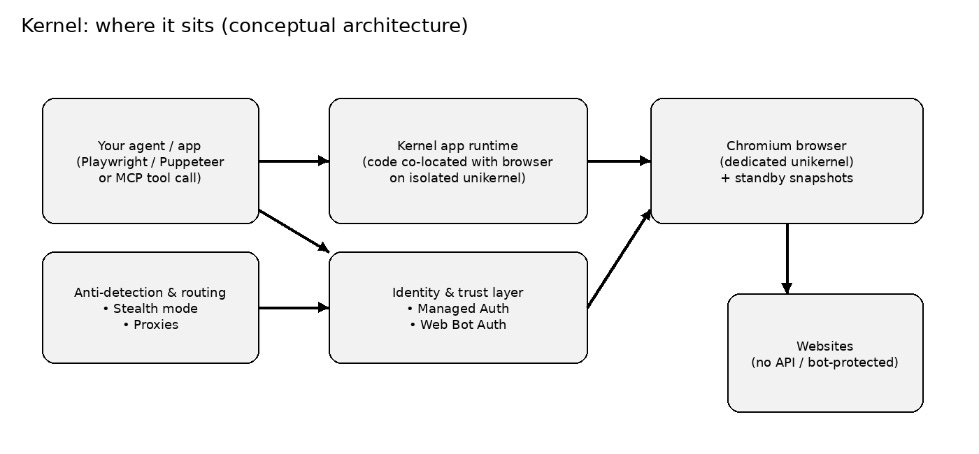

Browser automation is stateful compute. Kernel runs browsers on unikernels with standby + snapshotting: you don’t pay much to keep a session alive, and you don’t wait seconds to boot a new browser.

Kernel’s core promise is real-browser capability with production economics:

very low cold starts (claimed <20ms for browsers)

persistence as a first-class primitive (standby snapshots)

agent-oriented identity & auth (Managed Auth; Web Bot Auth)

debugging ergonomics (live view, replays) that make operations tolerable.

“The reason to build Kernel has always been clear.

LLMs can automate nearly any workflow on the internet, but browsers — the critical interface to that work — are fragile, hard to scale, and expensive to run. Kernel solves this. We provide browsers-as-a-service so AI agents can use the internet the same way people do. Our edge is our ability to deliver reliable browsers that spin up in milliseconds, persist state across workflows, and gracefully support human-in-the-loop interactions.

…As part of this announcement, I’m excited to share Kernel Agent Authentication — an identity and permissions layer that lets developers safely authorize agents to take actions on real user accounts, with full auditability and scope control.”

Durability hinges on whether Kernel can turn infra advantages into a moat. Two plausible moats:

Systems moat: unikernel snapshotting, scheduling, observability, and anti-detection are hard to replicate well.

Trust moat: if agent identity standards (Web Bot Auth) and Managed Auth become table stakes, Kernel’s early partnerships + integrations can lock in workflows.

Kernel positions Web Bot Auth as an emerging standard and says it partnered with Vercel to support it (identity for agents).

Kernel is already inching up the stack in two directions:

Workflow surface area: MCP server + app runtime so agents can call Kernel as a tool, not just a browser endpoint.

Capability surface area: file I/O, auth orchestration, identity proofs, and (likely) more primitives that turn the browser into an agent operating environment.

Concrete ROI shows up as faster automation (latency), less ops (no browser fleet), and fewer auth fires. Reported results:

Rye: saved $240K/year in engineering costs and reported 2x better performance than any other provider.

Prompting Company: 50,000+ browsers/mo.

Silkline: reducing checkout effort… by 80% and 6 weeks… to only 10 minutes.

Kernel sits between your agent code and the hostile web:

Your agent (Playwright/Puppeteer or MCP) calls Kernel.

Kernel runs your code and/or gives you a browser endpoint.

The browser runs in an isolated unikernel with standby snapshots.

Optional layers: proxies/stealth, Managed Auth, Web Bot Auth signing.

Representative use cases:

Agentic commerce: complete checkout flows across arbitrary merchants (Rye).

Product discovery/SEO for LLMs: seeing what real users see (Prompting Company).

Manufacturing procurement: supplier integration and COTS checkout workflows (Silkline).

Portal automation with file handling: download/upload PDFs, CSVs in regulated workflows (insurance/healthcare portals) via File I/O.

Bot-friendly identity: sign requests so sites can verify your agent (Web Bot Auth).

Why This Didn’t Exist Five Years Ago

Agents are finally practical, and the web is the largest unstructured API. Once LLMs can plan and see, the limiting reagent becomes infrastructure: browsers, state, identity, and compliance.

The full solution required multiple moving parts to be mature at once:

browser automation stacks (Selenium→Playwright) had to stabilize

cheap cloud compute + networking had to get good enough

unikernel toolchains and snapshotting needed production-grade paths

the demand signal (agentic workflows) had to be large enough to justify purpose-built infra.

A short lineage:

2004: Selenium popularizes browser automation for testing.

2017+: Chrome DevTools Protocol becomes the core remote control interface for modern browsers.

2020: Playwright brings cross-browser automation ergonomics.

2024–2026: LLM agents move from toy demos to production workflows, which forces infra to care about state and identity beyond page renders.

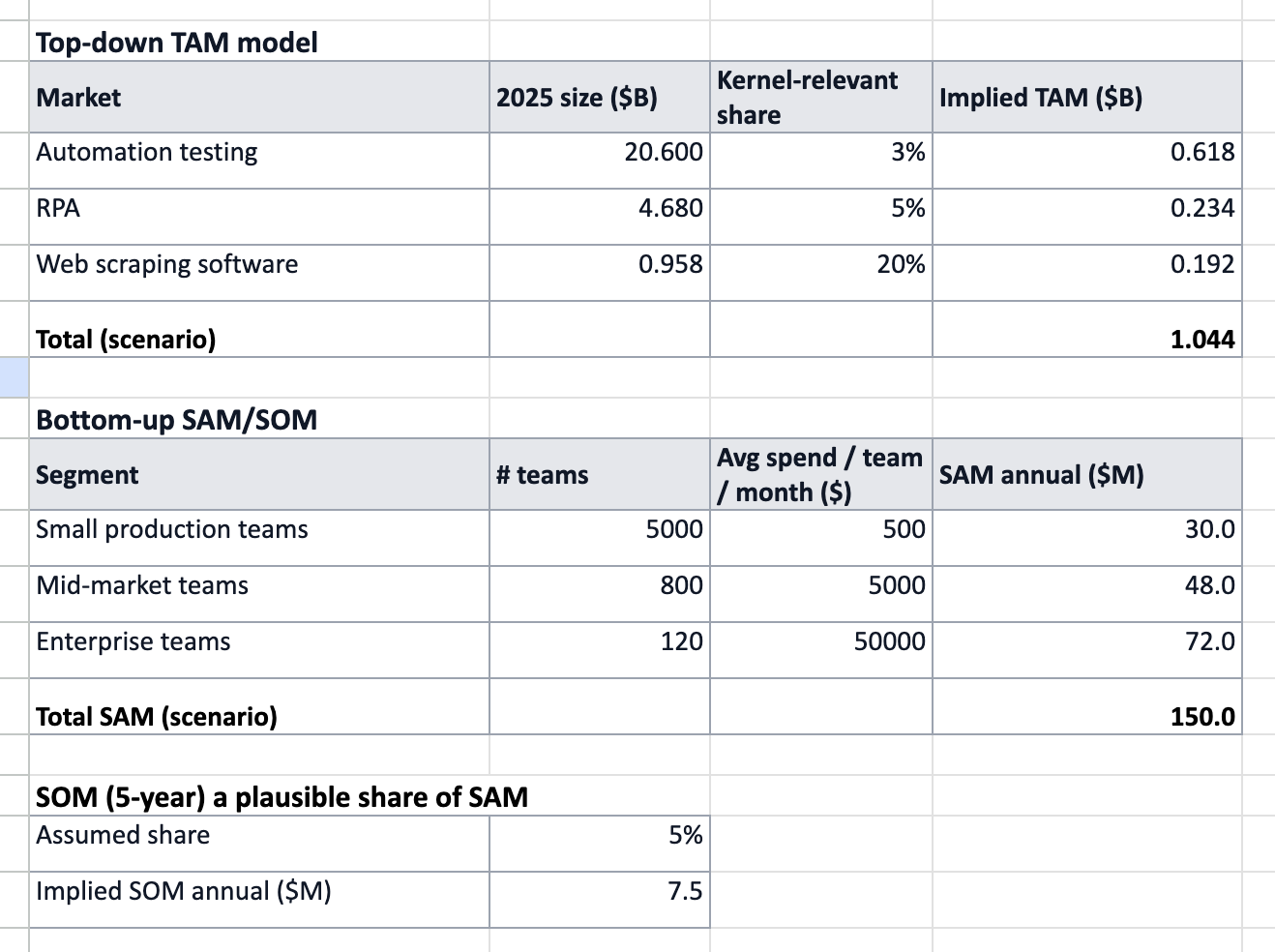

TAM: Every Workflow with a Login

Kernel’s customer is the builder/operator of browser automations: agent startups, platform teams, data teams, and RPA-like internal automation groups. The market is browser execution + identity + anti-bot as a cloud utility.

The company is trying to carve a specific wedge: agent-native browser infrastructure, not generic scraping and not generic testing. The new market is the intersection of LLM agents, web governance, and stateful sessions as durable infrastructure.

Kernel’s ICP looks like:

ships an agent that touches many websites

needs session persistence (logins, cookies) and debuggability (live view)

is cost-sensitive at scale (50k+ browser spins)

cares about legitimacy/trust (bot identity) and sometimes compliance (SOC2 claims).

Market sizing here is a little like trying to measure fog with a ruler: the category is real, but its edges are still moving. So instead of one heroic TAM number, we triangulate Kernel’s opportunity with two independent models and a sanity check.

Top‑down TAM: start with adjacent spend pools that already pay for browser automation (automation testing, RPA, web scraping) and apply a conservative Kernel‑relevant share that maps to paid cloud browser execution + identity/stealth + session persistence. This avoids the lazy move of calling the internet the TAM.

Bottom‑up SAM: count the teams running production web agents/automations and multiply by an expected monthly spend. We anchor spend to Kernel’s usage‑based pricing (active browser time) and public customer utilization (e.g., 50k+ browsers/month in one account). Segments are intentionally coarse; the knobs (team counts + $/team/month) are exposed so you can update them as new evidence arrives.

SOM (5‑year): choose a share of SAM that could plausibly be won given competition, sales motion, and product depth. A good SOM feels slightly uncomfortable: not “we’ll win 1% of the world,” but “we’ll win X% of the teams who truly need this.” Even a small market can compound if Kernel becomes a default utility with high retention, usage expansion, and pricing power.
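
The bottom-up SAM and SOM steps can be sketched as a small model with the knobs exposed. This is a minimal illustration, not the article's model: the segment names, team counts, spend figures, and share below are placeholder inputs chosen only to show the arithmetic, and `Segment`, `sam`, and `som` are hypothetical helper names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    name: str
    teams: int               # teams running production web agents/automations
    spend_per_month: float   # expected USD spend per team per month


def sam(segments: list[Segment]) -> float:
    """Annual SAM: sum over segments of teams x monthly spend x 12."""
    return sum(s.teams * s.spend_per_month * 12 for s in segments)


def som(sam_value: float, share: float) -> float:
    """SOM: the fraction of SAM assumed winnable over the horizon."""
    if not 0.0 <= share <= 1.0:
        raise ValueError("share must be between 0 and 1")
    return sam_value * share


# PLACEHOLDER inputs for illustration only; replace with your own evidence.
SEGMENTS = [
    Segment("agent startups", teams=1_000, spend_per_month=500.0),
    Segment("platform teams", teams=500, spend_per_month=2_000.0),
]

if __name__ == "__main__":
    total = sam(SEGMENTS)
    print(f"SAM: ${total:,.0f}/yr")
    print(f"SOM at a 10% share: ${som(total, 0.10):,.0f}/yr")
```

Keeping team counts and spend per team as separate inputs means either one can be updated independently as new evidence arrives, which is the point of exposing the knobs.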

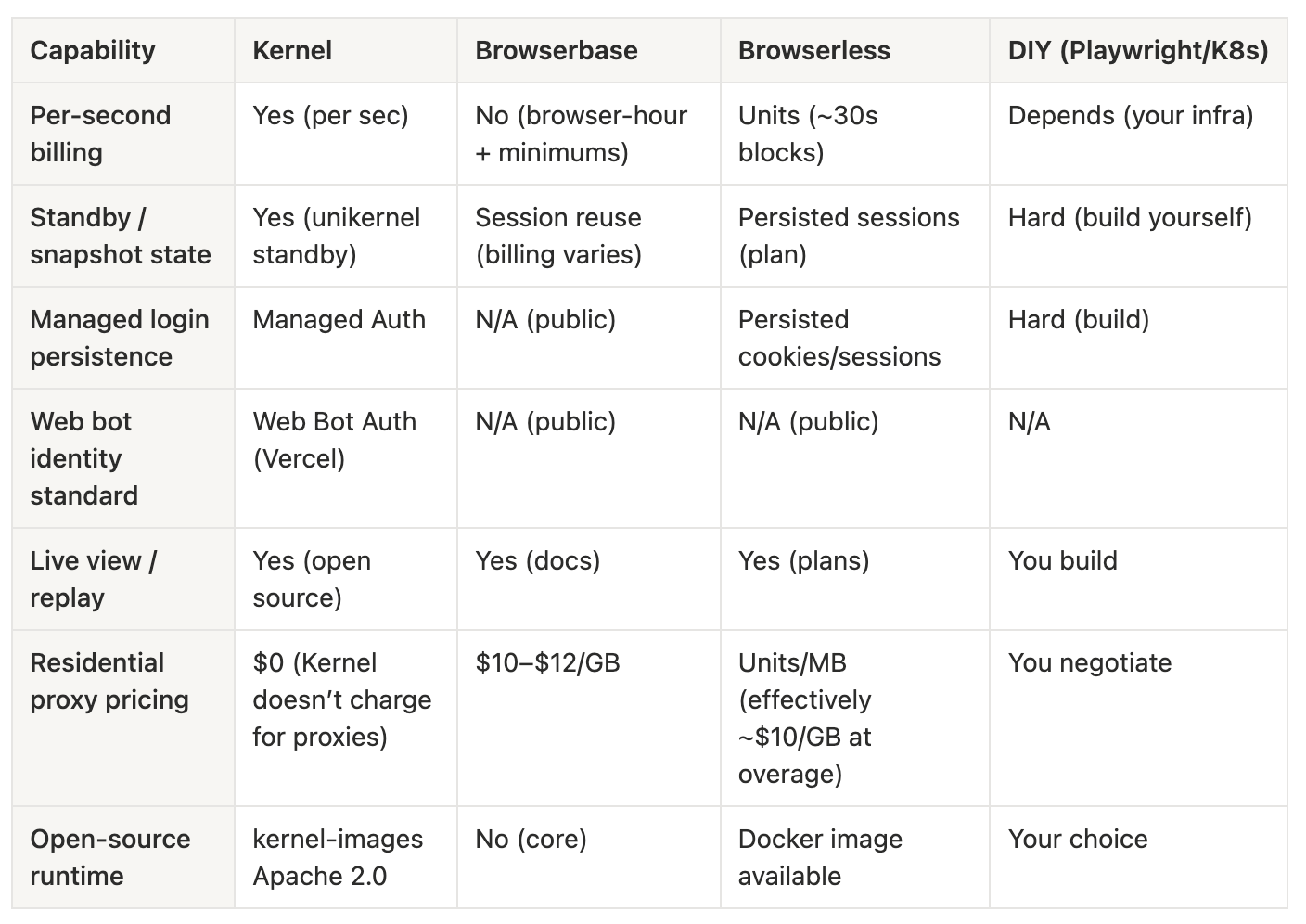

The Browser-Infra Knife Fight

Direct: Browserbase, Browserless, Steel (browser clouds). Browserbase and Browserless are explicit browsers-as-a-service peers with usage-based plans and concurrency tiers.

Indirect: in-house Playwright fleets; RPA suites; scraping networks that bundle browsers; LLM agent frameworks that might vertically integrate browsers.

Also, hyperscalers could productize managed browsers as a primitive.

Kernel’s stated plan is to be faster, be cheaper, and be more legitimate:

Faster via unikernels + standby snapshots.

Cheaper via per-second billing and not charging for idle time, plus inexpensive residential proxies.

More legitimate via Web Bot Auth and agent identity features. The positioning: sanctioned agents, auditable behavior.

Kernel’s advantages:

Standby snapshots (state reuse without paying full price for persistence).

Low cold starts.

Developer ergonomics: live view, replays, CLI.

Agent identity: Managed Auth, Web Bot Auth.

Pricing: headless is cheap per hour (about $0.06/hr at the published rate); residential proxy pricing undercuts the typical $10/GB.

Open-source core (kernel-images under Apache 2.0).

The risks: features converge; anti-bot remains an arms race; distribution matters.

What You Actually Buy (and Why It’s Sticky)

Kernel’s product surface includes:

Browsers-as-a-service: managed Chromium sessions that Playwright/Puppeteer can connect to.

Live view + replays for debugging and human-in-the-loop control.

Browser Pools + Standby for session reuse economics.

Profiles (cookie/session isolation), extensions, file I/O.

Managed Auth (login orchestration + durable authenticated sessions).

Web Bot Auth (cryptographic bot identity support with Vercel).

App runtime: deploy code to a serverless environment with browser access.

MCP server: expose Kernel browsers as tools for LLM agents.

Know-how: unikernel-based runtime, standby snapshotting, and scheduling/observability are the hard parts that aren’t visible from the API, but are where performance/cost advantages likely live.

The Economics of a Browser Runtime

Kernel’s path to thriving is to become a utility: the default place agents go when they need a browser that’s fast, stateful, and legitimate. In infra terms: win the execution layer and then expand into identity, auth, and workflow primitives. The ambition is to be the OS-level infrastructure for sanctioned agents interacting with the web.

The revenue model is a hybrid of subscription + usage:

Monthly platform fee (free / $30 / $200 / enterprise custom) includes credits.

Usage metered per second of browser time, plus (for browser pools) small disk charges while idle.

This is classic cloud utility economics: revenue scales with customer workload.

Published usage rates:

Headless: $0.0000166667/sec (~$0.06/hr)

Headful: $0.0001333336/sec (~$0.48/hr)
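
To make the per-second rates concrete, here is a small sketch that converts them to hourly prices and costs out a workload. The rates are the published ones listed above; the workload (50,000 sessions at 60 seconds of active time each) is an assumed example, and `hourly_rate` and `workload_cost` are hypothetical helper names.

```python
# Published per-second usage rates in USD, copied from the list above.
RATE_PER_SEC = {
    "headless": 0.0000166667,
    "headful": 0.0001333336,
}


def hourly_rate(mode: str) -> float:
    """Convert the per-second rate for `mode` to dollars per hour."""
    return RATE_PER_SEC[mode] * 3600


def workload_cost(mode: str, sessions: int, avg_seconds: float) -> float:
    """Usage cost of `sessions` sessions averaging `avg_seconds` of active time."""
    return RATE_PER_SEC[mode] * sessions * avg_seconds


if __name__ == "__main__":
    for mode in RATE_PER_SEC:
        print(f"{mode}: ${hourly_rate(mode):.2f}/hr")
    # Assumed workload: 50,000 sessions in a month, 60 s of active time each.
    for mode in RATE_PER_SEC:
        print(f"{mode}: ${workload_cost(mode, 50_000, 60):.2f}/mo")
```

Under that assumed workload, usage comes to roughly $50 per month headless and $400 per month headful, before platform fees, credits, proxies, or idle disk charges.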

Plans:

Developer (free + usage) includes $5 credits/mo

Hobbyist $30/mo + usage (credits $10)

Start-Up $200/mo + usage (credits $50)

Enterprise custom; HIPAA BAA listed as available for Enterprise.

Concurrency (on-demand): 5/10/50/custom.
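
The plan tiers and usage rate combine into a monthly bill roughly as sketched below. Two assumptions are not stated in the source: that included credits offset usage dollar for dollar and expire monthly, and that the 5/10/50 concurrency limits map to the Developer, Hobbyist, and Start-Up plans in that order. `Plan`, `monthly_bill`, and `cheapest_plan` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional

HEADLESS_PER_SEC = 0.0000166667  # published headless rate, USD per second


@dataclass(frozen=True)
class Plan:
    name: str
    monthly_fee: float     # USD platform fee
    credits: float         # USD usage credits included each month
    max_concurrency: int   # on-demand concurrent browsers (assumed mapping)


# Enterprise is omitted because its pricing and limits are custom.
PLANS = [
    Plan("Developer", 0.0, 5.0, 5),
    Plan("Hobbyist", 30.0, 10.0, 10),
    Plan("Start-Up", 200.0, 50.0, 50),
]


def monthly_bill(plan: Plan, browser_seconds: float,
                 rate: float = HEADLESS_PER_SEC) -> float:
    """Platform fee plus usage beyond the included credits.

    Assumption: credits offset usage 1:1 and unused credits are lost.
    """
    usage = browser_seconds * rate
    return plan.monthly_fee + max(0.0, usage - plan.credits)


def cheapest_plan(browser_seconds: float, peak_concurrency: int) -> Optional[Plan]:
    """Cheapest self-serve plan that fits the peak concurrency, else None."""
    eligible = [p for p in PLANS if p.max_concurrency >= peak_concurrency]
    if not eligible:
        return None  # the workload would need an Enterprise (custom) plan
    return min(eligible, key=lambda p: monthly_bill(p, browser_seconds))


if __name__ == "__main__":
    plan = cheapest_plan(browser_seconds=3_000_000, peak_concurrency=8)
    print(plan.name if plan else "Enterprise")
```

In this sketch the concurrency ceiling, not the usage bill, is what forces an upgrade: at 3,000,000 headless seconds a month the Developer plan is cheapest on paper, but a peak of 8 concurrent browsers rules it out.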

The sales & distribution looks like PLG (self-serve plans, open-source runtime, CLI, MCP), plus ecosystem partnerships (Vercel integrations; bot identity standards). Enterprise features (HIPAA BAA, shared Slack support) imply a sales-assisted motion at the top end.

The People Who Obsess Over Latency

Kernel is led by co-founders Catherine Jue (CEO) and Rafael “Raf” Garcia (CTO). The founding story (per Raf) is: he spent years building platform tooling at Clever, learned that opinionated infrastructure can turn a small team into a platform, then applied that lesson to agentic web automation. Kernel’s early team also includes founding engineers Mason Williams and Fumihiro Tamada. The public changelog and frequent product posts suggest a culture of fast iteration and documentation-as-product.

Financials

Kernel announced $22M in Seed + Series A led by Accel in October 2025.

From Browsers → Trust Infrastructure for Agents

The optimistic five-year outcome is that Kernel becomes the standard execution + identity layer for sanctioned web agents, the cloud operating system where agents run browsers, hold long-lived authenticated state, prove who they are, and expose auditable logs so websites can accept them.

The endgame is pricing power via indispensability. If Kernel becomes the default agent compliance + browser runtime layer, it can charge for reliability and trust, beyond just compute seconds.

The moat is data + iteration speed: every edge case (bot defenses, auth flows) is data; the better the system, the more workloads it attracts, which creates more data and tooling improvements.